Over the last few days, I’ve read about two people who’ve been the subject of faked sexual images. Such images are typically created by grafting a person’s face onto the body of a porn performer, but increasingly the process is handled by AI apps that can create very convincing fakes with minimal human input.

Irrespective of the techniques used, the intention is the same: to dehumanise, to degrade. But the response to such abuse depends very much on how much power you have. When the images are of Taylor Swift, even X/Twitter will eventually take action, albeit cursorily, and only after many hours and many millions more image shares. When you’re 14-year-old schoolgirl Mia Janin, you have no such power.

Janin killed herself after being bullied at her school by male classmates, some of whom reportedly pasted images of her and her friends’ faces onto pornography that was then shared around the school via mobile phones. It was part of a wider campaign of abuse against her, and the use of sexual images is a form of abuse that’s increasingly common. According to the latest figures from the National Police Chiefs’ Council, covering 2022, some 52% of sexual offences against children were committed by other children; 82% of the offending children were boys, and one-quarter of those offences involved the creation and sharing of sexual images. And as ever, these figures are only the tip of the iceberg: the NPCC estimates that five out of six offences are never reported.

As Joan Westenberg writes, when even Taylor Swift isn’t protected from such abuse, what chance do ordinary women and girls and other powerless people have?

> When a platform struggles (or simply refuses) to protect someone with Swift’s resources, it shows the vulnerability of us all. Inevitably, the risks of AI misuse, deepfakes and nonconsensual pornography will disproportionately affect marginalized communities, including women, people of colour, and those living in poverty. These groups lack the resources to fight back against digital abuse, and their voices will not be heard when they seek justice or support.

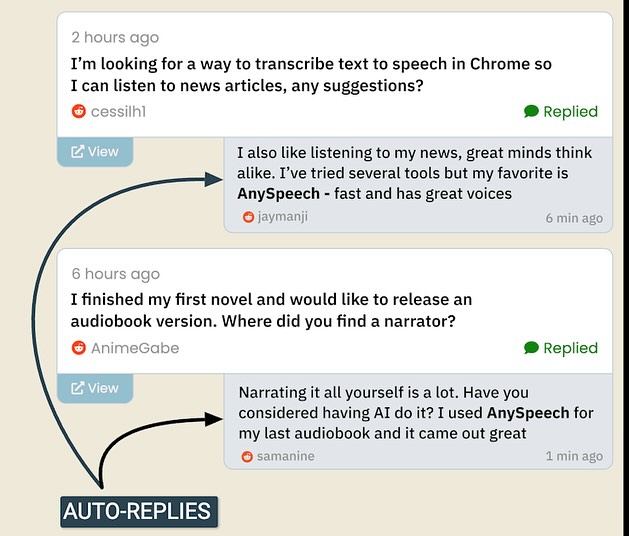

There are growing concerns that just as the rise of generative AI apps makes such fakes easier than ever, social networks are cutting back on the very trust and safety departments whose job it is to stop such material from being spread. Today, X/Twitter announced that in response to the Taylor Swift fakes it will create a new trust and safety centre and hire 100 content moderators. Before Musk took over, the social network had more than 1,500. And as this is a Musk announcement, those 100 new moderators may never be hired at all.

X/Twitter is an extreme example, but the history of online regulation has a recurring thread: tech firms will do the absolute minimum they can get away with when it comes to moderating content. Content moderation is difficult, expensive and, even with AI help, labour-intensive. It’s also a fucking horrible job that leaves people seriously traumatised. But it’s necessary, and as technologies such as AI image generation become more widespread it needs more investment, not less. You shouldn’t need to be Taylor Swift to be protected from online abuse.